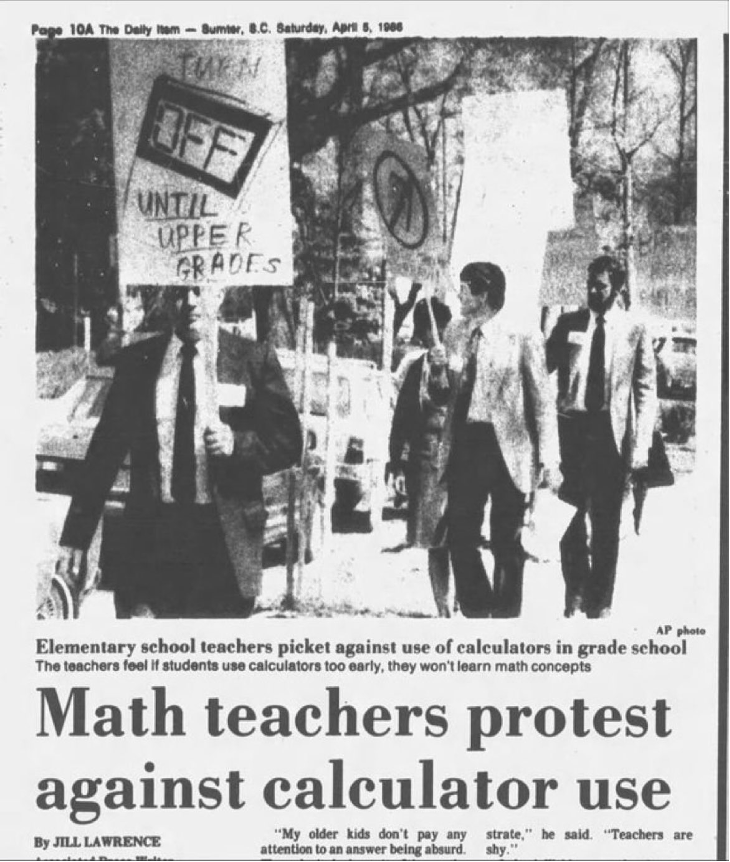

Every era thinks it’s teetering on the edge of something revolutionary; every era also finds reasons to step back. Printing presses were accused of ruining memory, erasers of encouraging mistakes, calculators of killing math, and the internet of replacing thinking with clicking. Today, AI tutors take their turn in the dock. The artefacts change; the reflex endures.

A short tour of resistance (and why it recurs)

In classrooms and workplaces, pushback is rarely irrational. It encodes uncertainty about norms, skills, incentives, and unintended consequences.

| 1440: Gutenberg’s printing press sparked criticism, with academics fearing that printed books would reduce memorization and critical thinking. 1980: The advent of the pencil eraser was seen as an incentive to make mistakes and a threat to careful writing. 1986: Protests against the use of calculators, arguing that students would forget how to do mental calculations. 2000: Criticism of internet access in classrooms, for fear of misinformation. 2010: Resistance to the use of smartphones and tablets in the classroom, with concerns about distractions and decreased social interaction among students. 2025: Resistance to AI tutors as easy-task.ai. |

Everett Rogers’ Diffusion of Innovations gives us a durable lens: innovations spread through social systems over time, and their adoption depends on five perceived attributes: relative advantage, compatibility, complexity, trialability, and observability; moving in waves from innovators to laggards along a characteristic S‑curve.

| “Academic research involves three steps: finding relevant information, assessing the quality of that information, then using appropriate information either to try to conclude something, to uncover something, or to argue something. The Internet is useful for the first step, somewhat useful for the second, and not at all useful for the third.” – By Beth Stafford in Brabazon, Tara (2007). The University of Google, Ashgate (UK), pp.22. |

What this means for AI in Indian education

Policy has set the table: NEP 2020 positions AI as a lever for learning, assessment, and administration; recent discussions propose Centres of Excellence to build institutional capacity. But diffusion won’t hinge on policy documents alone. Teachers and administrators adopt when AI clearly saves time, fits curricula and local languages, feels simple and trustworthy, can be piloted safely, and shows visible gains in student work.

Reviews of AI‑in‑education in India underline the same arc: real promise (personalization, admin efficiency), real constraints (infrastructure, capacity, equity, ethics). The practical path is micro‑pilots, transparent evaluation, and teacher‑led exemplars. Start small, show what changed, let peers observe, then scale. For scenario‑based futures and implementation pathways, see AI Scenarios on my site: r‑srini.in/2026/01/18/ai‑scenarios/

And for Indian industry: beyond pilots to redesigned work

Indian firms are enthusiastic adopters; many already use AI and most plan to expand. Globally, however, even frequent users often stall between pilots and enterprise‑level impact. Crossing that gap demands workflow redesign (not bolt‑on tools), sound data governance, and credible line‑of‑sight to P&L. Executives need proof of advantage, confidence in compatibility with systems and compliance, lower complexity via guardrails and usable interfaces, safe trialability through sandboxes, and peer observability via references.

Public investment, from compute to Centres of Excellence, helps the ecosystem, but diffusion inside firms still hinges on these attributes. High performers are already reframing AI from cost‑cutting to growth and innovation, a signal the early majority watches closely.

Three moves that convert resistance into readiness

- Stage it: Run bounded pilots that teachers/managers own; publish before‑after evidence others can observe and adapt. (Trialability, observability)

- Lower friction: Invest in training, vernacular UX, and clean data pipes; perceived complexity kills diffusion faster than controversy. (Complexity, compatibility)

- Prove advantage: Tie AI to concrete outcomes—recovered teacher time, reduced cycle times, new revenue lines—tracked publicly. (Relative advantage)

Bottom line: Resistance isn’t the enemy; it’s information. Use it to make AI useful, legible, and locally credible; the conditions under which social systems adopt innovations.

Cheers.

(c) 2026. R. Srinivasan.