As I began reflecting on my earlier posts, “You are intelligent: have you done something dumb?” and “Judgments”, I went back to this classic, beautifully titled article, “Hindsight ≠ foresight”. Written by B. Fischhoff way back in 1975, the paper reports two results that seem intuitive (at least in hindsight): once people know the outcome of an event, they judge that outcome to have been more probable; and they are unaware that this knowledge of what actually happened has influenced them.

Case class in a business school – hampered by hindsight bias

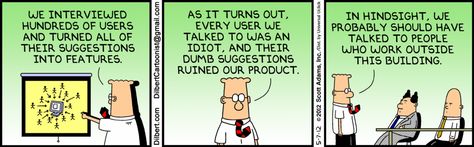

Take a business school case class, for instance. As a case teacher, I face this quite often. In a typical strategy case, the primary question to the class is “what should the company do?” And since most cases are set a few years in the past, a simple Internet search (done by students as part of their class preparation) will already have told them what actually happened. Armed with this hindsight, students try very hard to fit their preparation and theoretical arguments to the actual outcome, however irrational or improbable it might have been. A good management teacher therefore ought to account for this “hindsight bias” in students and ensure that the class discusses all possible outcomes fairly.

Should I, then, give my students only cases whose outcomes are not known? What should my criteria be for choosing cases for a class? Should I fight to eliminate this “hindsight bias”? Let me come back to this later.

An air-crash investigation

Let us take another example. A special team has been tasked with investigating the cause of an air crash. Any accident investigation inevitably entails piecing together information to establish a causal relationship between the antecedent factors and an event that is known to have happened. Here it is impossible to eliminate the source of the bias: the event itself. The team therefore has to be trained to construct a counterfactual (good) outcome from the evidence at hand. They need to recreate the antecedents of the event in a way that lets them judge whether anyone in the actors’ place (the pilots and crew) would have made the same decisions. Experimentally, this is akin to creating a control group that knows all the facts leading up to the case, but not the actual outcome.

Investigating white-collar crime

In the case of white-collar crime, especially financial fraud, another significant factor interacts with hindsight bias: the size or impact of the crime. Larger frauds are fraught with more pronounced biases, and media coverage of “select” white-collar crimes is testimony to this. Nicholas Bourtin (read the article here) adds that, armed with hindsight, financial crime investigators might ascribe malicious intent even to innocent mistakes or poor judgement.

As I was thinking about this issue, I saw the breaking news of a magnitude 7.2 earthquake hitting the Iraq-Iran border (Sunday, 12th November 2017). Hopefully, there isn’t much damage. The news triggered a thought.

Fighting hindsight bias – learning from geophysics

A useful lesson in fighting hindsight bias comes from geophysics. Consider how geologists and geophysicists study earthquakes and volcanic eruptions. These are events that just “happen”, and the scientists then “reconstruct” them from carefully collected data. Can we learn something from the way they fight hindsight bias? Sure.

Strategy #1: Conduct stability studies. Not just fault studies, but stability studies. Take highly quake-prone areas and study why earthquakes aren’t happening! Such data would provide the ‘normal’ distribution, with the occurrence of earthquakes as the outliers.

Strategy #2: Broaden the search. Take all retrospective data for analysis: study all the quakes that happened on a plate, or all the eruptions of a volcano. Such events may occur very infrequently and may be randomly distributed over time. However infrequent they are, it is worth studying the antecedent conditions every time, because one may then find a cause-effect relationship. For instance, one might conclude that most road accidents happen between 2.00am and 4.30am because drivers are then more likely to fall asleep at the wheel (that is, if cars are still being driven by humans!).

Strategy #3: Combine the two, and seek patterns. Conduct stability studies to understand why events do not happen, and longitudinal studies (with big data) to infer why they do. Combining the two reveals patterns, and such patterns can be immensely helpful in studying the antecedents of events and in effectively fighting hindsight bias.
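The comparison Strategy #3 calls for can be sketched as a simple two-by-two tally. This is a minimal sketch under stated assumptions: the `Period` record and the `event_rates` function are hypothetical names, and the counts in the usage example are made up purely for illustration. It contrasts the event rate when a candidate antecedent is present with the rate when it is absent; neither rate means anything without the “stable” periods in which nothing happened, which is exactly the data an event-only, hindsight-driven study leaves out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Period:
    """One observation window (hypothetical schema, for illustration)."""
    antecedent: bool  # was the candidate antecedent present?
    event: bool       # did the event (quake, crash, fraud) occur?


def event_rates(periods):
    """Return (P(event | antecedent), P(event | no antecedent)).

    If the data contain only periods in which the event occurred,
    both rates come out as 1.0 and the comparison says nothing.
    """
    with_a = [p for p in periods if p.antecedent]
    without_a = [p for p in periods if not p.antecedent]
    if not with_a or not without_a:
        raise ValueError("need periods both with and without the antecedent")
    rate_with = sum(p.event for p in with_a) / len(with_a)
    rate_without = sum(p.event for p in without_a) / len(without_a)
    return rate_with, rate_without


# Hypothetical counts, for illustration only: 5 events in 100 periods.
periods = (
    [Period(True, True)] * 3 + [Period(True, False)] * 17
    + [Period(False, True)] * 2 + [Period(False, False)] * 78
)
with_rate, without_rate = event_rates(periods)
print(f"P(event | antecedent)    = {with_rate:.3f}")
print(f"P(event | no antecedent) = {without_rate:.3f}")
```

Looking only at the five events, the antecedent was present in three of them, a figure that could be read either way. It is the 17 + 78 quiet periods that show whether the antecedent actually goes with a higher event rate, and even then a gap between the two rates shows association, not cause.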

Fighting hindsight bias – applying it in managerial judgement

Let us now apply the lessons from geophysics to managerial judgement. First, weigh the prior probabilities of an event appropriately. Imagine an angry boss (no, I would like to believe that not all bosses are always angry!). In trying to understand what angered her today, use prior probabilities appropriately. She may be angry because she is being held accountable for something beyond her control (like your productivity), or simply because she gets angry when she is frustrated at not being able to communicate with or convince others. Apply Strategy #1: ask yourself, when is she ‘not angry’? She is not angry when you complete your work on time, when you present your work properly (as she likes it), and when your work is of good quality. Then why is she angry today? You have the answer.
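Using prior probabilities “appropriately” is, formally, Bayes’ rule. As a sketch with hypothetical labels: let $H_1, \dots, H_k$ be candidate explanations for her mood, assumed to be mutually exclusive and exhaustive (for example, $H_1$: your work was late or poorly presented; $H_2$: she is frustrated at not being able to convince others), and let $A$ be the observation that she is angry today.

$$
P(H_i \mid A) = \frac{P(A \mid H_i)\,P(H_i)}{\sum_{j=1}^{k} P(A \mid H_j)\,P(H_j)}
$$

The priors $P(H_j)$ capture how often each situation arises at all, and the likelihoods $P(A \mid H_j)$ how likely she is to be angry when it does; knowing when she is ‘not angry’ is one way to estimate the latter.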

Second, think about sample size before you think about probability. Unless you have a really large sample of such events, avoid reasoning in terms of probability. Consider predictions in sport or financial services. I was taught in my first finance classes that “past performance is not an indication of future performance”. And my brief indulgence in sports tells me that, by the law of averages, “sustained good performance does not last long”. Would you be confident predicting the goals scored by a football team if its prior results were [4-1, 3-0, 5-2, and 1-0] or [1-4, 4-2, 2-2, 1-0], with the second number in each pair representing the goals scored by the opposition? Most people would be more confident predicting the performance of the team with the former scoring pattern than the latter. Yet the next game might just be against the league leader (featuring someone with the initials CR7), and none of these results would matter at all. You really need to collect loads of data on each team’s performance, including the historical performances of all the opposing teams, before you make any predictions.
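To put a number on how little four matches reveal, here is a minimal sketch that swaps in a standard textbook tool, a Student’s t confidence interval for the mean, which the argument above does not itself specify. It uses only the goals scored in the two sequences above; the function name `mean_interval` is hypothetical, and treating the matches as independent draws from one distribution is an assumption (one the CR7 scenario explicitly breaks).

```python
import math
from statistics import mean, stdev

# Goals scored by the team (first number in each pair) in its four prior matches.
pattern_a = [4, 3, 5, 1]  # 4-1, 3-0, 5-2, 1-0
pattern_b = [1, 4, 2, 1]  # 1-4, 4-2, 2-2, 1-0

# Two-sided 95% critical value of Student's t with 3 degrees of freedom (n = 4).
T_CRIT_N4 = 3.182


def mean_interval(goals, t_crit=T_CRIT_N4):
    """Return (mean, low, high): a rough 95% t-interval for mean goals per match.

    The default t_crit is only valid for four matches; pass the matching
    critical value for other sample sizes.
    """
    m = mean(goals)
    half_width = t_crit * stdev(goals) / math.sqrt(len(goals))
    return m, m - half_width, m + half_width


for name, goals in [("pattern A", pattern_a), ("pattern B", pattern_b)]:
    m, low, high = mean_interval(goals)
    print(f"{name}: mean {m:.2f} goals, 95% interval [{low:.2f}, {high:.2f}]")
```

With these numbers the two intervals overlap heavily (pattern A runs from roughly 0.5 to 6 goals, pattern B from just below zero to about 4.25), so four results do not separate the two teams. The negative lower bound is an artifact of the interval’s normal-theory assumption, and is itself a sign of how thin the data are.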

Third, stay away from causal relationships (no, I did not say casual relationships!) unless you have really “big data” both on the ‘normal’ distribution of the event not happening and on the outlier occurrences of the event happening. Remember the wonder batsman Pranav Dhanawade, the 17-year-old kid who scored 1009* runs for his local cricket team? A few years later, his father has decided to return the scholarship he received, since he has not performed up to expectations (read it here). When an event of this nature (an extraordinary performance) occurs, it is important not just to reward it but also to invest in nurturing the talent. Without an adequate support structure to hone his talent, the financial reward was insufficient to sustain even acceptable performance.

So why do some firms perform better than others?

The answer may lie not in analyzing why the high performers did better, but in understanding what the others do that keeps them from performing as well; in longitudinal and cross-sectional (big) data on many firms’ performance; and in being very cautious about making causal assertions. Isn’t this the core of strategy research today?

Cheers!

(c) 2017. Srinivasan R