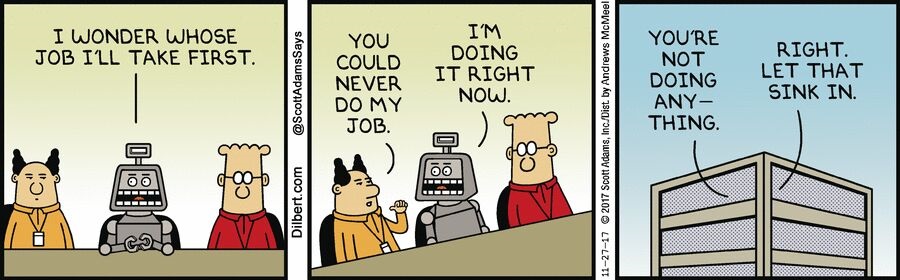

This is a follow-up to my post last week on Moravec’s Paradox in AI. In that post, I enumerated five major challenges for AI and robotics: 1) training machines to interpret languages, 2) perfecting machine-to-man communication, 3) designing social robots, 4) developing multi-functional robots, and 5) helping robots make judgments. All of that focused on what programmers need to do. In this short post, I draw out the implications for what organisations and leaders need to do to integrate AI (and for that matter, any hype-tech) into their work and lives.

Most of the hype around technologies is built around a series of gulfs: gulfs of motivation, cognition, and communication. They are surely related to each other. Let me explain them in reverse order.

Three gulfs

The first gulf is the communication gap between developers and managers. Developers know how to talk to machines. They actively codify processes and provide step-by-step instructions to machines to help them perform their tasks. Managers, especially the ones facing consumers, speak in stories and anecdotes, whereas developers need precise instructions that can be translated into pseudo-code. For instance, a customer journey that is to be digitalised needs to be broken down into a variety of steps.

Let me give you an example from a firm that I worked with. A multi-brand retail outlet wanted to digitalise customer walk-ins and help guide customers to the right floor/aisle. Sounds simple, right? The brief to the developers was to build a robot that would “replace the greeter”. The development team went about building a voice-activated humanoid robot that would greet a customer as she walked in, ask her a set of standard questions (like ‘what are you looking for today?’) and respond with answers (like ‘we have a lot of new arrivals on the third floor’). The tests went very well, except that the developers had not understood that only a small proportion of the customers arrived alone! When customers came as couples, families, or groups, the robot treated them as separate customers and tried responding to each of them separately. What made things worse was that the robot could not distinguish children’s voices from female voices, and greeted even young boys as girls/women.

The expensive robot now sits like a toy in a corner of the reception, witnessing the return of the plastic-smiling greeters. The entire problem could have been solved by a set of interactive tablets … Just because the managers asked the developers to “replace the greeter”, they went about creating an over-engineered but inadequate humanoid. The reverse could also happen, where the developers build only the bare minimum of features, which would make the entire exercise useless.

To bridge this gulf, we either train the managers to write pseudo-code, or get the developers to visualise customer journeys.
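To make the idea of a "precise brief" concrete, here is a minimal sketch of how the missing requirement (greet a group once, not once per person) might have been written down. Everything in it is my own illustrative assumption and not the actual project's code: the `WalkIn` record, the five-second window, and the function names are all hypothetical.

```python
from dataclasses import dataclass

# Assumption for illustration: people entering within this many seconds
# of the previous person are treated as having arrived together.
PARTY_WINDOW_SECONDS = 5.0


@dataclass
class WalkIn:
    visitor_id: str    # hypothetical identifier from a door sensor
    entered_at: float  # seconds since the store opened


def group_into_parties(walk_ins, window=PARTY_WINDOW_SECONDS):
    """Group walk-ins so that each party is greeted once, not once per person."""
    parties = []
    for walk_in in sorted(walk_ins, key=lambda w: w.entered_at):
        # Join the latest party if this person entered soon after its last member.
        if parties and walk_in.entered_at - parties[-1][-1].entered_at <= window:
            parties[-1].append(walk_in)
        else:
            parties.append([walk_in])
    return parties


def greeting_for(party):
    """Return one greeting per party, worded for its size."""
    if len(party) == 1:
        return "Welcome! What are you looking for today?"
    return f"Welcome, all {len(party)} of you! What are you looking for today?"


if __name__ == "__main__":
    arrivals = [
        WalkIn("a", 0.0), WalkIn("b", 2.0), WalkIn("c", 3.5),  # a family
        WalkIn("d", 60.0),                                      # a lone shopper
    ]
    for party in group_into_parties(arrivals):
        print(greeting_for(party))
```

A manager who can read even this much pseudo-code is in a position to object ("a family does not always walk in within five seconds of each other"), which is exactly the conversation that never took place in the greeter project.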

The second gulf is that between algorithmic and creative thinking. Business development executives and strategy officers think in terms of stretch goals and focus on what is expected in the near and distant future. Developers, on the other hand, are forced to work with technologies in the realm of current possibilities. They refer to all this fuzzy language, these aspirational goals, and this corporatese as “gas” (to borrow a phrase from Indian business school students). Science and technology education at primary and secondary school is largely about learning algorithmic thinking. However, as managers gain experience and learn about the context, they are trained to think beyond algorithms in the name of creativity and innovation. While creative thinking and algorithmic thinking are both important, the difference between them accentuates the communication gap discussed above.

| Algorithmic thinking | Creative thinking |
| --- | --- |
| Algorithmic thinking is a way of getting to a solution through the clear definition of the steps needed – nothing happens by magic. Rather than coming up with a single answer to a problem, like 42, pupils develop algorithms. They are instructions or rules that, if followed precisely (whether by a person or a computer), lead to answers to both the original and similar problems[1]. | Creative thinking means looking at something in a new way. It is the very definition of “thinking outside the box.” Often, creativity in this sense involves what is called lateral thinking, or the ability to perceive patterns that are not obvious. Creative people have the ability to devise new ways to carry out tasks, solve problems, and meet challenges[2]. |

The third gulf is that of reinforcement. Human resource professionals and machine learning experts use the same word, with much the same meaning. In everyday usage, positive reinforcement rewards desired behaviour, whereas negative reinforcement penalises undesirable behaviour (strictly speaking, behavioural psychologists call the latter punishment, and reserve negative reinforcement for the removal of something unpleasant). Such reinforcements are an integral part of human learning from childhood, whereas machines have to be specially programmed to learn from them. Managers are used to employing reinforcements in various forms to get their work done. However, artificially intelligent systems do not respond to such reinforcements (yet). Remember the greeter-robot that we discussed earlier. What does the robot do when people are surprised, shocked, or even startled as it starts speaking? Can we programme the robot to recognise such reactions and respond appropriately? Most developers would use algorithmic thinking to programme the robot to understand and respond to rational actions from people, not to emotions, sarcasm, and figures of speech. Natural language processing (NLP) can take us some distance, but helping the machine learn continuously and cumulatively requires a lot of work.
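For readers curious about what "programming in" reinforcement might look like, here is a toy sketch. It is only an illustration under my own assumptions: the greeting styles, the reward values (+1 for a pleased customer, -1 for a startled one), and the learning rate are all hypothetical, and the genuinely hard part, recognising the customer's reaction in the first place, is assumed away.

```python
def update_preference(preferences, style, reward, learning_rate=0.5):
    """Nudge the score of a greeting style towards the reward it just received.

    `reward` is assumed to be +1.0 (customer pleased) or -1.0 (customer
    startled); producing that number from a real reaction is not shown here.
    """
    updated = dict(preferences)  # leave the caller's dictionary untouched
    updated[style] += learning_rate * (reward - updated[style])
    return updated


def preferred_style(preferences):
    """Pick the greeting style with the highest score so far."""
    return max(preferences, key=preferences.get)


if __name__ == "__main__":
    scores = {"loud_greeting": 0.0, "soft_greeting": 0.0}
    scores = update_preference(scores, "loud_greeting", reward=-1.0)  # startled
    scores = update_preference(scores, "soft_greeting", reward=+1.0)  # pleased
    print(preferred_style(scores))
```

The arithmetic is trivial; what the manager takes for granted with a human greeter (noticing a flinch, reading a smile) is precisely the input this sketch has to be handed from outside.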

Those who wonder what happened!

There are three kinds of people in the world: those who make things happen, those who watch things happen, and those who wonder what happened! I am not sure whether this quote can be attributed to a specific person, but when I was learning change management as an eager management student, I heard my Professor repeat it in every session. Similarly, there are some managers (and their organisations) who wonder what happened when their AI projects do not yield the required results.

Unless these three gulfs are bridged, organisations cannot reap adequate returns on their AI investments. Organisations need to build appropriate cultures and processes that bridge these gulfs. It is imperative that leaders invest in understanding the potential and limitations of AI, and that developers learn to appreciate business realities. I am not sure how this would happen, or when these gulfs could be bridged, if at all.

Comments and experiences welcome.

Cheers.

© 2019. R Srinivasan, IIM Bangalore.

[1] https://teachinglondoncomputing.org/resources/developing-computational-thinking/algorithmic-thinking/

[2] https://www.thebalancecareers.com/creative-thinking-definition-with-examples-2063744